Data Science

This is a protected page.

Contents

- 1 Projects portfolio

- 2 Data Analytics courses

- 3 Possible sources of data

- 4 What is data

- 5 What is Data Science

- 6 Styles of Learning - Types of Machine Learning

- 7 Some real-world examples of big data analysis

- 8 Statistic

- 9 Descriptive Data Analysis

Projects portfolio

-

Try the App at http://dashboard.sinfronteras.ws

-

Github repository: https://github.com/adeloaleman/AmazonLaptopsDashboard

-

Visit the Web App at http://www.vglens.sinfronteras.ws

-

This Application was developed using Python-Django Web framework

-

Visit the Web App at http://62.171.143.243

-

Github repository: https://github.com/adeloaleman/WebApp-CloneOfTwitter

-

This Application was developed using:

-

Back-end: Node.js (Express) (TypeScript)

-

Front-end: React (TypeScript)

-

Visit the Web App at http://fakenewsdetector.sinfronteras.ws

-

Github repository https://github.com/adeloaleman/RFakeNewsDetector

Data Analytics courses

Data Science courses

- Posts

- Top 50 Machine Learning interview questions: https://www.linkedin.com/posts/mariocaicedo_machine-learning-interviews-activity-6573658058562555904-CzeV

- https://www.linkedin.com/feed/update/urn:li:ugcPost:6547849699011977216/

- Udemy: https://www.udemy.com/

- Python for Data Science and Machine Learning Bootcamp - Nivel básico

- Machine Learning, Data Science and Deep Learning with Python - Nivel básico - Parecido al anterior

- Data Science: Supervised Machine Learning in Python - Nivel más alto

- Mathematical Foundation For Machine Learning and AI

- The Data Science Course 2019: Complete Data Science Bootcamp

- Coursera - By Stanford University

- Udacity: https://eu.udacity.com/

- Columbia University - COURSE FEES USD 1,400

Possible sources of data

What is data

It is difficult to define such a broad concept, but the definition that I like it that data is a collection (or any set) of characters or files, such as numbers, symbols, words, text files, images, files, audio files, etc, that represent measurements, observations, or just descriptions, that are gathered and stored for some purpose. https://www.mathsisfun.com/data/data.html https://www.computerhope.com/jargon/d/data.htm

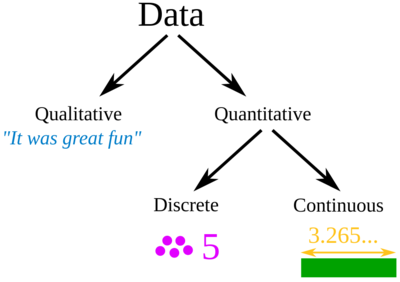

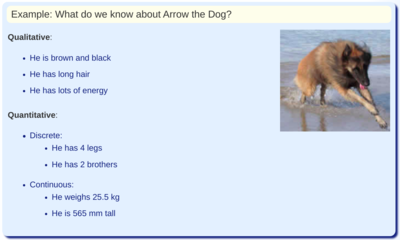

Qualitative vs quantitative data

https://learn.g2.com/qualitative-vs-quantitative-data

| Qualitative data | Quantitative data |

|---|---|

| Qualitative data is descriptive and conceptual information (it describes something) | Quantitative data is numerical information (numbers) |

| It is subjective, interpretive, and exploratory | It is objective, to-the-point, and conclusive |

| It is non-statistical | It is statistical |

| It is typically unstructured or semi-structured. | It is typically structured |

| Examples:

See unstructured data examples below. |

Examples:

See structured data examples below. |

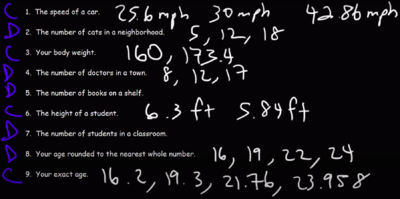

Discrete and continuous data

https://www.youtube.com/watch?v=cz4nPSA9rlc

Quantitative data can be discrete or continuous.

- Continuous data can take on any value in an interval.

- We usually say that continuous data is measured.

- Examples:

- Measurements of temperature: ºF.

- Temperature can be any value within an interval and it is measured (not counted)

- Discrete data can only have specific values.

- We usually say that discrete data is counted.

- Discrete data is usually (but not always) whole numbers:

- Examples:

- Possible values on a Dice Roller:

- Shoe sizes: . They are not whole numbers but can not be any number.

-

Taken from https://www.youtube.com/watch?v=cz4nPSA9rlc

Taken from https://www.youtube.com/watch?v=cz4nPSA9rlc -

Taken from https://www.youtube.com/watch?v=cz4nPSA9rlc

Taken from https://www.youtube.com/watch?v=cz4nPSA9rlc

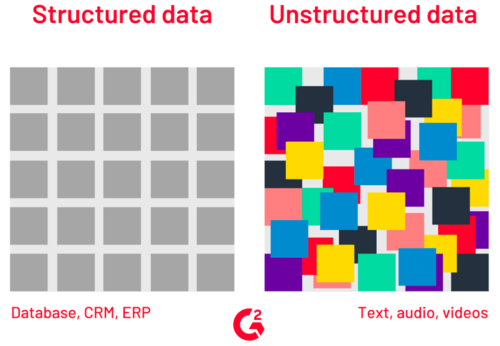

Structured vs Unstructured data

https://learn.g2.com/structured-vs-unstructured-data

http://troindia.in/journal/ijcesr/vol3iss3/36-40.pdf

| Structured data | Unstructured data | Semi-structured data |

|---|---|---|

| Structured data is organized within fixed fields or columns, usually in relational databases (or spreadsheets) so it can be easily queried with SQL

https://learn.g2.com/structured-vs-unstructured-data https://www.talend.com/resources/structured-vs-unstructured-data |

It's data that doesn't fit easily into a spreadsheet or a relational database. | The line between Semi-structured data and Unstructured data has always been unclear. Semi-structured data is usually referred to as information that is not structured in a traditional database but contains some organizational properties that make its processing easier. |

|

|

For example, NoSQL documents are considered to be semi-structured data since they contain keywords that can be used to process the documents easier. https://www.youtube.com/watch?v=dK4aGzeBPkk |

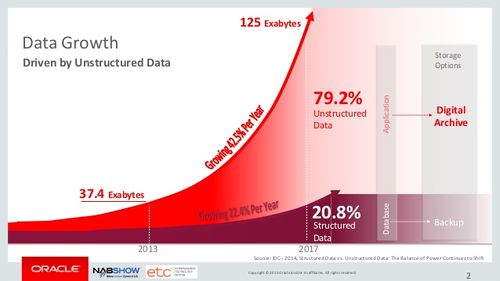

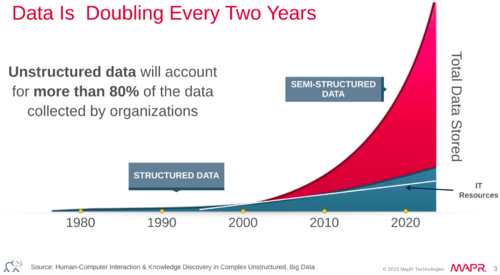

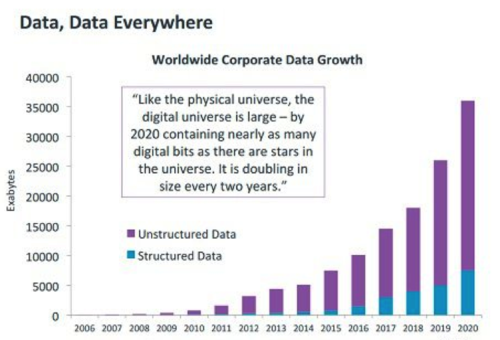

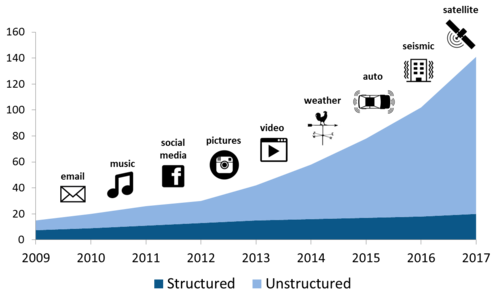

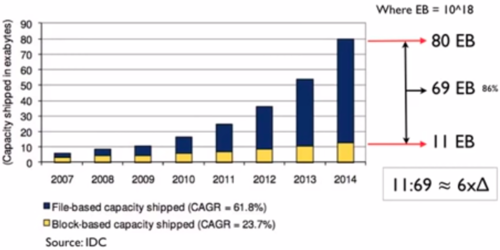

It is important to highlight that the huge increase in data in the last 10 years has been driven by the increase in unstructured data. Currently, some estimations indicate that there are around 300 exabytes of data, of which around 80% is unstructured data.

The prefix exa indicates multiplication by the sixth power of 1000 ().

Some sources also suggest that the amount of data is doubling every 2 years.

-

Source: IDC. Taken from https://www.youtube.com/watch?v=WBU7sW1jy2o

Source: IDC. Taken from https://www.youtube.com/watch?v=WBU7sW1jy2o

Data Levels and Measurement

Levels of Measurement - Measurement scales

https://www.statisticssolutions.com/data-levels-and-measurement/

There are four Levels of Measurement in research and statistics: Nominal, Ordinal, Interval, and Ratio.

In Practice:

- Most schemes accommodate just two levels of measurement: nominal and ordinal

- There is one special case: dichotomy (otherwise known as a "boolean" attribute)

| Values have meaningful order | Distance between values is defined | Mathematical operations make sense

(Values can be used to perform mathematical operations) |

There is a meaning ful zero-point | Values can be used to perform statistical computations | Example | |||||||||||||||||||||||||||||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Comparison operators | Addition and subtrac tion | Multiplica tion and division | "Counts", aka, "Fre quency of Distribu tion" | Mode | Median | Mean | Std | |||||||||||||||||||||||||||||||||||||||

| Nominal | Values serve only as labels. Also called "categorical", "enumerated", or "discrete". However, "enumerated" and "discrete" imply order |

|

For an «outlook» attribute from weather data, potential values could be "sunny", "overcast", and "rainy". | |||||||||||||||||||||||||||||||||||||||||||

| Ordinal | Ordinal attributes are called "numeric", or "continuous", however "continuous" implies mathematical continuity |

|

A «temperature» attribute in weather data with potential values fo: "hot" > "warm" > "cool" | |||||||||||||||||||||||||||||||||||||||||||

| Interval |

|

a «Temperature» attribute composed by numeric measures of such property | ||||||||||||||||||||||||||||||||||||||||||||

| Ratio |

|

The «weight» (e.g., in pounds)

Other examples: gross sales and income of a company. | ||||||||||||||||||||||||||||||||||||||||||||

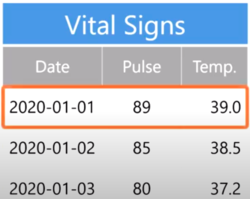

What is an example

An example, also known in statistics as an observation, is an instance of the phenomenon that we are studying. An observation is characterized by one or a set of attributes (variables).

In data science, we record observations on the rows of a table.

For example, imaging that we are recording the vital signs of a patient. For each observation we would record the «date of the observation», the «patient's heart» rate, and the «temperature»

What is a dataset

[Noel Cosgrave slides]

- A dataset is typically a matrix of observations (in rows) and their attributes (in columns).

- It is usually stored as:

- Flat-file (comma-separated values (CSV)) (tab-separated values (TSV)). A flat file can be a plain text file or a binary file.

- Spreadsheets

- Database table

- It is by far the most common form of data used in practical data mining and predictive analytics. However, it is a restrictive form of input as it is impossible to represent relationships between observations.

What is Metadata

Metadata is information about the background of the data. It can be thought of as "data about the data" and contains: [Noel Cosgrave slides]

- Description of the variables.

- Information about the data types for each variable in the data.

- Restrictions on values the variables can hold.

What is Data Science

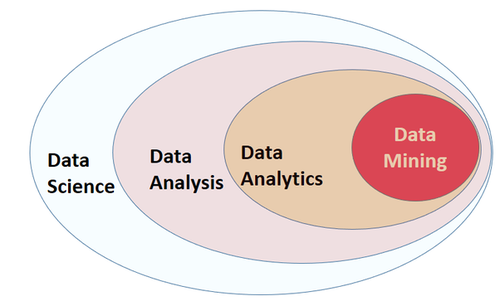

There are many different terms that are related and sometimes even used as synonyms. It is actually hard to define and differentiate all these related disciplines such as:

Data Science - Data Analysis - Data Analytics - Predictive Data Analytics - Data Mining - Machine Learning - Big Data - AI and even more - Business Analytics.

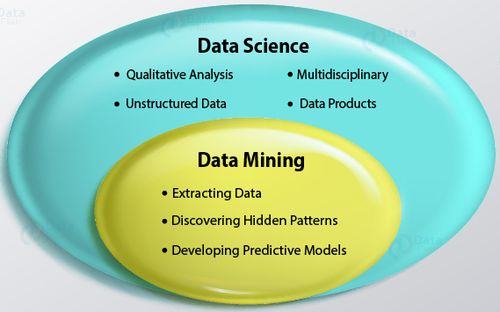

Data Science

I think the broadest term is Data Sciences. Data Sciences is a very broad discipline (an umbrella term) that involves (encompasses) many subsets such as Data Analysis, Data Analytics, Data Mining, Machine Learning, Big data (could also be included), and several other related disciplines.

A general definition could be that Data Science is a multi-disciplinary field that uses aspects of statistics, computer science, applied mathematics, data visualization techniques, and even business analysis, with the goal of getting new insights and new knowledge (uncovering useful information) from a vast amount of data, that can help in deriving conclusion and usually in taking business decisions http://makemeanalyst.com/what-is-data-science/ https://www.loginworks.com/blogs/top-10-small-differences-between-data-analyticsdata-analysis-and-data-mining/

Data analysis

Data analysis is still a very broad process that includes many multi-disciplinary stages, it goes from: (that are not usually related to Data Analytics or Data Mining)

- Defining a Business objective

- Data collection (Extracting the data)

- Data Storage

- Data Integration: Multiple data sources are combined. http://troindia.in/journal/ijcesr/vol3iss3/36-40.pdf

- Data Transformation: The data is transformed or consolidated into forms that are appropriate or valid for mining by performing various aggregation operations. http://troindia.in/journal/ijcesr/vol3iss3/36-40.pdf

- Data cleansing, Data modeling, Data mining, and Data visualizing, with the goal of uncovering useful information that can help in deriving conclusions and usually in taking business decisions. [EDUCBA] https://www.loginworks.com/blogs/top-10-small-differences-between-data-analyticsdata-analysis-and-data-mining/

- This is the stage where we could use Data mining and ML techniques.

- Optimisation: Making the results more precise or accurate over time.

Data Mining (Data Analytics - Predictive Data Analytics) (Hasta ahora creo que estos términos son prácticamente lo mismo)

We can say that Data Mining is a Data Analysis subset. It's the process of (1) Discovering hidden patterns in data and (2) Developing predictive models, by using statistics, learning algorithms, and data visualization techniques.

Common methods in data mining are: See Styles of Learning - Types of Machine Learning section

Big Data

Big data describes a massive amount of data that has the potential to be mined for information but is too large to be processed and analyzed using traditional data tools.

Machine Learning

Al tratar de encontrar una definición para Machine Learning me di cuanta de que muchos expertos coinciden en que no hay una definición standard para ML.

En este post se explica bien la definición de ML: https://machinelearningmastery.com/what-is-machine-learning/

Estos vídeos también son excelentes para entender what ML is:

- https://www.youtube.com/watch?v=f_uwKZIAeM0

- https://www.youtube.com/watch?v=ukzFI9rgwfU

- https://www.youtube.com/watch?v=WXHM_i-fgGo

- https://www.coursera.org/lecture/machine-learning/what-is-machine-learning-Ujm7v

Una de las definiciones más citadas es la definición de Tom Mitchell. This author provides in his book Machine Learning a definition in the opening line of the preface:

Tom Mitchell

The field of machine learning is concerned with the question of how to construct computer programs that automatically improve with experience.

So, in short we can say that ML is about writing computer programs that improve themselves.

Tom Mitchell also provides a more complex and formal definition:

Tom Mitchell

A computer program is said to learn from experience E with respect to some class of tasks T and performance measure P, if its performance at tasks in T, as measured by P, improves with experience E.

Don't let the definition of terms scare you off, this is a very useful formalism. It could be used as a design tool to help us think clearly about:

- E: What data to collect.

- T: What decisions the software needs to make.

- P: How we will evaluate its results.

Suppose your email program watches which emails you do or do not mark as spam, and based on that learns how to better filter spam. In this case: https://www.coursera.org/lecture/machine-learning/what-is-machine-learning-Ujm7v

- E: Watching you label emails as spam or not spam.

- T: Classifying emails as spam or not spam.

- P: The number (or fraction) of emails correctly classified as spam/not spam.

Machine Learning and Data Mining are terms that overlap each other. This is logical because both use the same techniques (weel, many of the techniques uses in Data Mining are also use in ML). I'm talking about Supervised and Unsupervised Learning algorithms (that are also called Supervised ML and Unsupervised ML algorithms).The difference is that in ML we want to construct computer programs that automatically improve with experience (computer programs that improve themselves)

We can, for instance, use a Supervised learning algorithm (Naive Bayes, for example) to build a model that, for example, classifies emails as spam or no-spam. So we can use labeled training data to build the classifier and then use it to classify unlabeled data.

So far, even if this classifier is usually called an ML classifier, it is NOT strictly a ML program. It is just a Data Mining or Predictive data analytics task. It's not a strict ML program because the classifier is not automatically improving itself with experience.

Now, if we are able to use this classifier to develop a program that automatically gathers and adds more training data to rebuild the classifier and updates the classifier when its performance improves, this would now be a strict ML program; because the program is automatically gathering new training data and updating the model so it will automatically improve its performance.

Styles of Learning - Types of Machine Learning

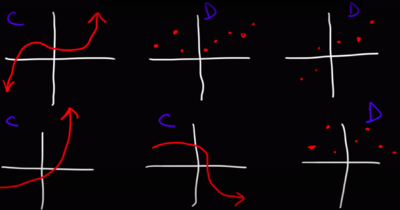

Supervised Learning

Supervised Learning (Supervised ML):

https://en.wikipedia.org/wiki/Supervised_learning https://machinelearningmastery.com/supervised-and-unsupervised-machine-learning-algorithms/

Supervised learning is the process of using training data (training data/labeled data: input (x) - output (y) pairs) to produce a mapping function () that allows mapping from the input variable () to the output variable (). The training data is composed of input (x) - output (y) pairs.

Put more simply, in Supervised learning we have input variables () and an output variable () and we use an algorithm that is able to produce an inferred mapping function from the input to the output.

The goal is to approximate the mapping function so well that when we have new input data (), we can predict the output variable .

The dependent variable is the variable that is to be predicted (). An independent variable is the variable or variables that is used to predict or explain the dependent variable ().

It is not so easy to see and understand the mathematical conceptual difference between Regression and Classification techniques. In both methods, we determine a function from an input variable to an output variable. It is clear that regressions methods predict continuous variables (the output variable is continuous), and classification predicts discrete variables. Now, if we think about the mathematical conceptual difference, we must notice that regression is estimating the mathematical function that most closely fits the data. In some classification methods, it is clear that we are not estimating a mathematical function that fits the data, but just a method/algorithm/mapping_function (no sé cual sería el término más adecuado) that allows us to map the input to the output. This is, for example, clear in K-Nearest Neighbors where the algorithm doesn't generate a mathematical function that fits the data but only a mapping function (de nuevo, no sé si éste sea el mejor término) that actually (in the case of KNN) relies on the data (KNN determines the class of a given unlabeled observation by identifying the k-nearest labeled observations to it). So, the mapping function obtained in KNN is attached to the training data. In this case, is clear that KNN is not returning a mathematical function that fits the data. In Naïve Bayes, the mapping function obtained is not attached to the data. That is to say, when we use the mapping function generate by NB, this doesn't require the training data (of course we require the training data to build the NB Mapping function, but not to apply the generated function to classify a new unlabeled observation which is the case of KNN). However, we can see that the mathematical concept behind NB is not about finding a mathematical function that fits the data but it relies on a probabilistic approach (tengo que analizar mejor lo último que he dicho aquí sobre NB). Now, when it comes to an algorithm like Decision Trees, it is not so clear to see and understand the mathematical conceptual difference between Regression and Classification. in DT, I think that (even if the output is a discrete variable) we are generating a mathematical function that fits the data. I can see that the method of doing so is not so clear as in the case of, for example, Linear regression, but it would in the end be a mathematical function that fits the data. I think this is why, by doing simples variation in the algorithms, decision trees can also be used as a regression method.

De hecho, Regression and classification methods are so closely related that:

- Some algorithms can be used for both classification and regression with small modifications, such as Decision trees, SVM, and Artificial neural networks. https://machinelearningmastery.com/classification-versus-regression-in-machine-learning/

- A regression algorithm may predict a discrete value, but the discrete value in the form of an integer quantity. https://machinelearningmastery.com/classification-versus-regression-in-machine-learning/

- A classification algorithm may predict a continuous value, but the continuous value is in the form of a probability for a class label.

- Logistic Regression: Contrary to popular belief, logistic regression IS a regression model. The model builds a regression model to predict the probability that a given data entry belongs to the category numbered as "1". Just like Linear regression assumes that the data follows a linear function, Logistic regression models the data using the sigmoid function. https://www.geeksforgeeks.org/understanding-logistic-regression/

- There are methods for implementing Regression using classification algorithms:

- Regression techniques (Correlation methods)

- A regression algorithm is able to approximate a mapping function () from input variables () to a continuous output variable (). https://machinelearningmastery.com/classification-versus-regression-in-machine-learning/

- For example, the price of a house may be predicted using regression techniques.

- Quizá podríamos decir que Regression analysis is the process of finding the mathematical function that most closely fits the data. The most common form of regression analysis is linear regression, which is the process of finding the line that most closely fits the data.

- The purpose of regression analysis is to: [Noel]

- Predict the value of the dependent variable as a function of the value(s) of at least one independent variable.

- Explain how changes in an independent variable are manifested in the dependent variable

- Linear Regression

- Decision Tree Regression

- Support Vector Machines (SVM): It can be used for classification and regression analysis

- Neural Network Regression

- Regression algorithms are used for:

- Prediction of continuous variables: future prices/cost, incomes, etc.

- Housing Price Prediction: For example, a regression model could be used to predict the value of a house based on location, number of rooms, lot size, and other factors.

- Weather forecasting: For example. A «temperature» attribute of weather data.

- Classification techniques

- Classification is the process of identifying to which of a set of categories a new observation belongs. https://en.wikipedia.org/wiki/Statistical_classification

- A classification algorithm is able to approximate a mapping function () from input variables () to a discrete output variable (). So, the mapping function predicts the class/label/category of a given observation. https://machinelearningmastery.com/classification-versus-regression-in-machine-learning/

- For example, an email can be classified as "spam" or "not spam".

- K-Nearest Neighbors

- Decision Trees

- Random Forest

- Naive Bayes

- Logistic Regression

- Support Vector Machines (SVM): It can be used for classification and regression analysis

- Neural Network Classification

- ...

- Classification algorithms are used for:

- Text/Image classification

- Medical Diagnostics

- Weather forecasting: For example. An «outlook» attribute of weather data with potential values of "sunny", "overcast", and "rainy"

- Fraud Detection

- Credit Risk Analysis

Unsupervised Learning

Unsupervised Learning (Unsupervised ML)

- Clustering

- It is the task of dividing the data into groups that contain similar data (grouping data that is similar together).

- For example, in a Library, We can use clustering to group similar books together, so customers that are interested in a particular kind of book can see other similar books

- K-Means Clustering

- Mean-Shift Clustering

- Density-based spatial clustering of applications with noise (DBSCAN)

- Clustering methods are used for:

- Recommendation Systems: Recommendation systems are designed to recommend new items to users/customers based on previous user's preferences. They use clustering algorithms to predict a user's preferences based on the preferences of other users in the user's cluster.

- For example, Netflix collects user-behavior data from its more than 100 million customers. This data helps Netflix to understand what the customers want to see. Based on the analysis, the system recommends movies (or tv-shows) that users would like to watch. This kind of analysis usually results in higher customer retention. https://www.youtube.com/watch?v=dK4aGzeBPkk

- Customer Segmentation

- Targeted Marketing

- Dimensionally reduction

- Dimensionally reduction methods are used for:

- Big Data Visualisation

- Meaningful compression

- Structure Discovery

- Association Rules

Reinforcement Learning

Some real-world examples of big data analysis

- Credit card real-time data:

- Credit card companies collect and store the real-time data of when and where the credit cards are being swiped. This data helps them in fraud detection. Suppose a credit card is used at location A for the first time. Then after 2 hours the same card is being used at location B which is 5000 kilometers from location A. Now it is practically impossible for a person to travel 5000 kilometers in 2 hours, and hence it becomes clear that someone is trying to fool the system. https://www.youtube.com/watch?v=dK4aGzeBPkk

Statistic

- Probability vs Likelihood: https://www.youtube.com/watch?v=pYxNSUDSFH4

Descriptive Data Analysis

Rather than find hidden information in the data, descriptive data analysis looks to summarize the dataset.

- Some of the measures commonly included in descriptive data analysis:

- Central tendency: Mean, Mode, Media

- Variability (Measures of variation): Range, Quartile, Standard deviation, Z-Score

- Shape of distribution: Probabilistic distribution plot, Histogram, Skewness, Kurtosis

Central tendency

https://statistics.laerd.com/statistical-guides/measures-central-tendency-mean-mode-median.php

A central tendency (or measure of central tendency) is a single value that attempts to describe a variable by identifying the central position within that data (the most typical value in the data set).

The mean (often called the average) is the most popular measure of the central tendency, but there are others, such as the median and the mode.

The mean, median, and mode are all valid measures of central tendency, but under different conditions, some measures of central tendency are more appropriate to use than others.

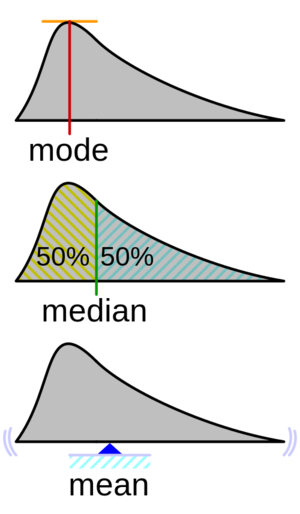

File:Visualisation_mode_median_mean.svg

Mean

The mean (or average) is the most popular measure of central tendency.

The mean is equal to the sum of all the values in the data set divided by the number of values in the data set.

The mean is usually denotated as (population mean) or (pronounced x bar) (sample mean):

An important property of the mean is that it includes every value in your data set as part of the calculation. In addition, the mean is the only measure of central tendency where the sum of the deviations of each value from the mean is always zero.

When not to use the mean

When the data has values that are unusual (too small or too big) compared to the rest of the data set (outliers) the mean is usually not a good measure of the central tendency.

For example, consider the wages of the employees in a factory:

| Staff | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |

|---|---|---|---|---|---|---|---|---|---|---|

| Salary |

The mean salary for these ten employees is $30.7k. However, inspecting the data we can see that this mean value might not be the best way to accurately reflect the typical salary of an employee, as most workers have salaries in a range between $12k to 18k. The mean is being skewed by the two large salaries. As we will find out later, taking the median would be a better measure of central tendency in this situation.

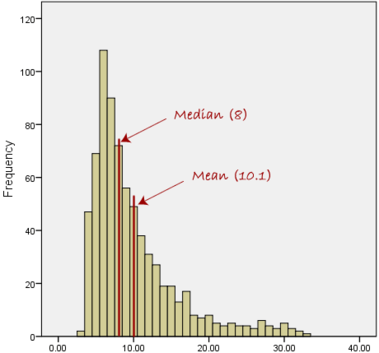

Another case when we usually prefer the median over the mean (or mode) is when our data is skewed (i.e., the frequency distribution for our data is skewed).

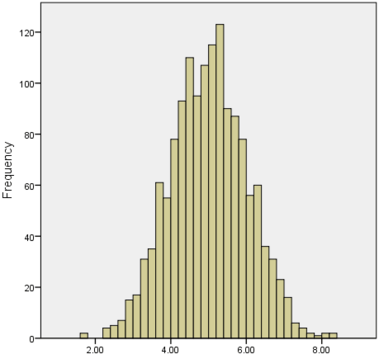

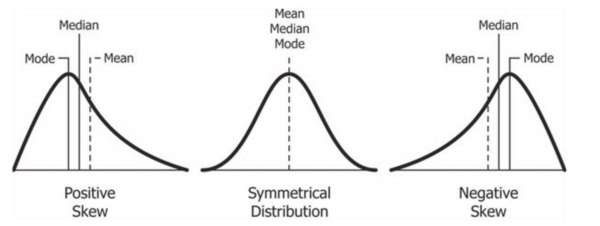

If we consider the normal distribution - as this is the most frequently assessed in statistics - when the data is perfectly normal, the mean, median, and mode are identical. Moreover, they all represent the most typical value in the data set. However, as the data becomes skewed the mean loses its ability to provide the best central location for the data. Therefore, in the case of skewed data, the median is typically the best measure of the central tendency because it is not as strongly influenced by the skewed values.

Median

The median is the middle score for a set of data that has been arranged in order of magnitude. The median is less affected by outliers and skewed data. In order to calculate the median, suppose we have the data below:

| 65 | 55 | 89 | 56 | 35 | 14 | 56 | 55 | 87 | 45 | 92 |

|---|

We first need to rearrange that data in order of magnitude:

| 14 | 35 | 45 | 55 | 55 | 56 | 56 | 65 | 87 | 89 | 92 |

|---|

Then, the Median is the middle score. In this case, 56. This works fine when you have an odd number of scores, but what happens when you have an even number of scores? What if you had only 10 scores? Well, you simply have to take the middle two scores and average the result. So, if we look at the example below:

| 65 | 55 | 89 | 56 | 35 | 14 | 56 | 55 | 87 | 45 |

|---|

| 14 | 35 | 45 | 55 | 55 | 56 | 56 | 65 | 87 | 89 |

|---|

We can now take the 5th and 6th scores and calculate the mean. So the Median would be 55.5.

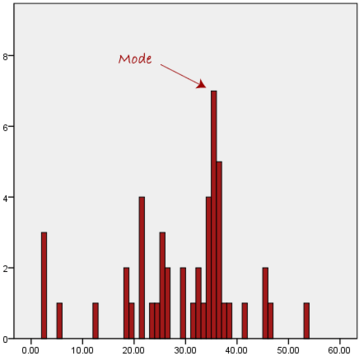

Mode

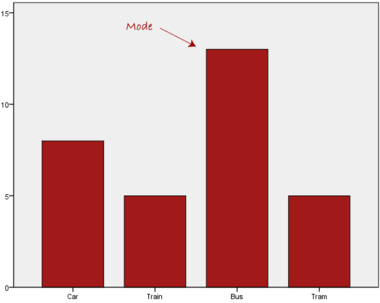

The mode is the most frequent score in our data set.

On a histogram, it represents the highest bar. For continuous variables, we usually define a bin size, so every bar in the histogram represent a range of values depending on the bin size

Normally, the mode is used for categorical data where we wish to know which is the most common category, as illustrated below:

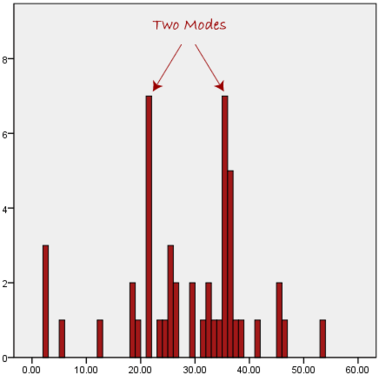

We can see above that the most common form of transport, in this particular data set, is the bus. However, one of the problems with the mode is that it is not unique, so it leaves us with problems when we have two or more values that share the highest frequency, such as below:

We are now stuck as to which mode best describes the central tendency of the data. This is particularly problematic when we have continuous data because we are more likely not to have anyone value that is more frequent than the other. For example, consider measuring 30 peoples' weight (to the nearest 0.1 kg). How likely is it that we will find two or more people with exactly the same weight (e.g., 67.4 kg)? The answer, is probably very unlikely - many people might be close, but with such a small sample (30 people) and a large range of possible weights, you are unlikely to find two people with exactly the same weight; that is, to the nearest 0.1 kg. This is why the mode is very rarely used with continuous data.

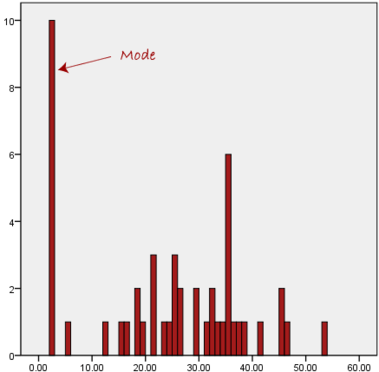

Another problem with the mode is that it will not provide us with a very good measure of central tendency when the most common mark is far away from the rest of the data in the data set, as depicted in the diagram below:

In the above diagram the mode has a value of 2. We can clearly see, however, that the mode is not representative of the data, which is mostly concentrated around the 20 to 30 value range. To use the mode to describe the central tendency of this data set would be misleading.

Skewed Distributions and the Mean and Median

We often test whether our data is normally distributed because this is a common assumption underlying many statistical tests. An example of a normally distributed set of data is presented below:

When you have a normally distributed sample you can legitimately use both the mean or the median as your measure of central tendency. In fact, in any symmetrical distribution the mean, median and mode are equal. However, in this situation, the mean is widely preferred as the best measure of central tendency because it is the measure that includes all the values in the data set for its calculation, and any change in any of the scores will affect the value of the mean. This is not the case with the median or mode.

However, when our data is skewed, for example, as with the right-skewed data set below:

we find that the mean is being dragged in the direct of the skew. In these situations, the median is generally considered to be the best representative of the central location of the data. The more skewed the distribution, the greater the difference between the median and mean, and the greater emphasis should be placed on using the median as opposed to the mean. A classic example of the above right-skewed distribution is income (salary), where higher-earners provide a false representation of the typical income if expressed as a mean and not a median.

If dealing with a normal distribution, and tests of normality show that the data is non-normal, it is customary to use the median instead of the mean. However, this is more a rule of thumb than a strict guideline. Sometimes, researchers wish to report the mean of a skewed distribution if the median and mean are not appreciably different (a subjective assessment), and if it allows easier comparisons to previous research to be made.

Summary of when to use the mean, median and mode

Please use the following summary table to know what the best measure of central tendency is with respect to the different types of variable:

| Type of Variable | Best measure of central tendency |

|---|---|

| Nominal | Mode |

| Ordinal | Median |

| Interval/Ratio (not skewed) | Mean |

| Interval/Ratio (skewed) | Median |

For answers to frequently asked questions about measures of central tendency, please go to: https://statistics.laerd.com/statistical-guides/measures-central-tendency-mean-mode-median-faqs.php

Measures of Variation

The Variation or Variability is a measure of the spread of the data (of a variable) or a measure of how widely distributed are the values around the mean | the deviation of a variable from its mean.

Range

The range is just composed of the min and max values of a variable.

Range can be used on Ordinal, Ratio and Interval scales

Quartile

https://statistics.laerd.com/statistical-guides/measures-of-spread-range-quartiles.php

The Quartile is a measure of the spread of a data set. To calculate the Quartile we follow the same logic of the Median. Remember that when calculating the Median, we first sort the data from the lowest to the highest value, so the Median is the value in the middle of the sorted data. In the case of the Quartile, we also sort the data from the lowest to the highest value but we break the data set into quarters, and we take 3 values to describe the data. The value corresponding to the 25% of the data, the one corresponding to the 50% (which is the Median), and the one corresponding to the 75% of the data.

A first example:

[2 3 9 1 9 3 5 2 5 11 3]

Sorting the data from the lowest to the highest value:

25% 50% 75% [1 2 "2" 3 3 "3" 5 5 "9" 9 11]

The Quartile is [2 3 9]

Another example. Consider the marks of 100 students who have been ordered from the lowest to the highest scores.

- The first quartile (Q1): Lies between the 25th and 26th student's marks.

- So, if the 25th and 26th student's marks are 45 and 45, respectively:

- (Q1) = (45 + 45) ÷ 2 = 45

- So, if the 25th and 26th student's marks are 45 and 45, respectively:

- The second quartile (Q2): Lies between the 50th and 51st student's marks.

- If the 50th and 51st student's marks are 58 and 59, respectively:

- (Q2) = (58 + 59) ÷ 2 = 58.5

- If the 50th and 51st student's marks are 58 and 59, respectively:

- The third quartile (Q3): Lies between the 75th and 76th student's marks.

- If the 75th and 76th student's marks are 71 and 71, respectively:

- (Q3) = (71 + 71) ÷ 2 = 71

- If the 75th and 76th student's marks are 71 and 71, respectively:

In the above example, we have an even number of scores (100 students, rather than an odd number, such as 99 students). This means that when we calculate the quartiles, we take the sum of the two scores around each quartile and then half them (hence Q1= (45 + 45) ÷ 2 = 45) . However, if we had an odd number of scores (say, 99 students), we would only need to take one score for each quartile (that is, the 25th, 50th and 75th scores). You should recognize that the second quartile is also the median.

Quartiles are a useful measure of spread because they are much less affected by outliers or a skewed data set than the equivalent measures of mean and standard deviation. For this reason, quartiles are often reported along with the median as the best choice of measure of spread and central tendency, respectively, when dealing with skewed and/or data with outliers. A common way of expressing quartiles is as an interquartile range. The interquartile range describes the difference between the third quartile (Q3) and the first quartile (Q1), telling us about the range of the middle half of the scores in the distribution. Hence, for our 100 students:

However, it should be noted that in journals and other publications you will usually see the interquartile range reported as 45 to 71, rather than the calculated

A slight variation on this is the which is half the Hence, for our 100 students:

Box Plots

boxplot(iris$Sepal.Length,

col = "blue",

main="iris dataset",

ylab = "Sepal Length")

Variance

https://statistics.laerd.com/statistical-guides/measures-of-spread-absolute-deviation-variance.php

The variance is a measure of the deviation of a variable from the mean.

The deviation of a value is:

- Unlike the Absolute deviation, which uses the absolute value of the deviation in order to "rid itself" of the negative values, the variance achieves positive values by squaring the deviation of each value.

Standard Deviation

https://statistics.laerd.com/statistical-guides/measures-of-spread-standard-deviation.php

The Standard Deviation is the square root of the variance. This measure is the most widely used to express deviation from the mean in a variable.

- Population standard deviation ()

- Sample standard deviation formula ()

Sometimes our data is only a sample of the whole population. In this case, we can still estimate the Standard deviation; but when we use a sample as an estimate of the whole population, the Standard deviation formula changes to this:

See Bessel's correction: https://en.wikipedia.org/wiki/Bessel%27s_correction

- A value of zero means that there is no variability; All the numbers in the data set are the same.

- A higher standard deviation indicates more widely distributed values around the mean.

- Assuming the frequency distributions approximately normal, about of all observations are within and standard deviation from the mean.

Z Score

Z-Score represents how far from the mean a particular value is based on the number of standard deviations. In other words, a z-score tells us how many standard deviations away a value is from the mean.

Z-Scores are also known as standardized residuals.

Note: mean and standard deviation are sensitive to outliers.

We use the following formula to calculate a z-score. https://www.statology.org/z-score-python/

- is a single raw data value

- is the population mean

- is the population standard deviation

In Python:

scipy.stats.zscore(a, axis=0, ddof=0, nan_policy='propagate') https://docs.scipy.org/doc/scipy/reference/generated/scipy.stats.zscore.html

Compute the z score of each value in the sample, relative to the sample mean and standard deviation.

a = np.array([ 0.7972, 0.0767, 0.4383, 0.7866, 0.8091,

0.1954, 0.6307, 0.6599, 0.1065, 0.0508])

from scipy import stats

stats.zscore(a)

Output:

array([ 1.12724554, -1.2469956 , -0.05542642, 1.09231569, 1.16645923,

-0.8558472 , 0.57858329, 0.67480514, -1.14879659, -1.33234306])

Shape of Distribution

The shape of the distribution of a variable is visualized by building a Probability distribution plot or a histogram. There are also some numerical measures (like the Skewness and the Kurtosis) that provide ways of describing, by a simple value, some features of the shape the distribution of a variable. [Adelo]

Probability distribution

No estoy seguro de cuales son los terminos correctos de este tipo de gráficos (Density - Distribution plots)

https://en.wikipedia.org/wiki/Probability_distribution#Continuous_probability_distribution

https://en.wikipedia.org/wiki/Probability_density_function

https://towardsdatascience.com/histograms-and-density-plots-in-python-f6bda88f5ac0

The Normal Distribution

https://www.youtube.com/watch?v=rzFX5NWojp0

https://en.wikipedia.org/wiki/Normal_distribution

Histograms

.

Skewness

https://en.wikipedia.org/wiki/Skewness

https://www.investopedia.com/terms/s/skewness.asp

https://towardsdatascience.com/histograms-and-density-plots-in-python-f6bda88f5ac0

https://docs.scipy.org/doc/scipy/reference/generated/scipy.stats.skew.html

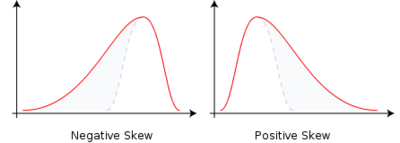

Skewness is a method for quantifying the lack of symmetry in the probability distribution of a variable.

- Skewness = 0 : Normally distributed.

- Skewness < 0 : Negative skew: The left tail is longer. The mass of the distribution is concentrated on the right of the figure. The distribution is said to be left-skewed, left-tailed, or skewed to the left, despite the fact that the curve itself appears to be skewed or leaning to the right; left instead refers to the left tail being drawn out and, often, the mean being skewed to the left of a typical center of the data. A left-skewed distribution usually appears as a right-leaning curve. https://en.wikipedia.org/wiki/Skewness

- Skewness > 0 : Positive skew : The right tail is longer. the mass of the distribution is concentrated on the left of the figure. The distribution is said to be right-skewed, right-tailed, or skewed to the right, despite the fact that the curve itself appears to be skewed or leaning to the left; right instead refers to the right tail being drawn out and, often, the mean being skewed to the right of a typical center of the data. A right-skewed distribution usually appears as a left-leaning curve.

Kurtosis

https://www.itl.nist.gov/div898/handbook/eda/section3/eda35b.htm

https://en.wikipedia.org/wiki/Kurtosis

https://www.simplypsychology.org/kurtosis.html

The kurtosis is a measure of the "tailedness" of the probability distribution. https://en.wikipedia.org/wiki/Kurtosis

We can say that Kurtosis is a measure of the concentration of values on the tail of the distribution. Which of course gives you an idea of the concentration of values on the peak of the distribution; but it is important to know that the measure provided by the kurtosis is related to the tail. [Adelo]

- The kurtosis of any univariate normal distribution is 3. A univariate normal distribution is usually called just normal distribution.

- Platykurtic: Kurtosis less than 3 (Negative Kurtosis if we talk about the adjusted version of Pearson's kurtosis, the Excess kurtosis).

- A negative value means that the distribution has a light tail compared to the normal distribution (which means that there is little data in the tail).

- An example of a platykurtic distribution is the uniform distribution, which does not produce outliers.

- Leptokurtic: Kurtosis greater than 3 (Positive Excess kurtosis).

- A positive Kurtosis tells that the distribution has a heavy tail (outlier), which means that there is a lot of data in the tail.

- An example of a leptokurtic distribution is the Laplace distribution, which has tails that asymptotically approach zero more slowly than a Gaussian and therefore produce more outliers than the normal distribution.

- This heaviness or lightness in the tails usually means that your data looks flatter (or less flat) compared to the normal distribution.

- It is also common practice to use the adjusted version of Pearson's kurtosis, the excess kurtosis, which is the kurtosis minus 3, to provide the comparison to the standard normal distribution. Some authors use "kurtosis" by itself to refer to the excess kurtosis. https://en.wikipedia.org/wiki/Kurtosis

- It must be noted that the Kurtosis is related to the tails of the distribution, not its peak; hence, the sometimes-seen characterization of kurtosis as "peakedness" is incorrect. https://en.wikipedia.org/wiki/Kurtosis

In Python: https://docs.scipy.org/doc/scipy/reference/generated/scipy.stats.kurtosis.html

scipy.stats.kurtosis(a, axis=0, fisher=True, bias=True, nan_policy='propagate')

- Compute the kurtosis (Fisher or Pearson) of a dataset.

import numpy as np

from scipy.stats import kurtosis

data = norm.rvs(size=1000, random_state=3)

data2 = np.random.randn(1000)

kurtosis(data2)

<syntaxhighlight lang="python3">

from scipy.stats import kurtosis

import matplotlib.pyplot as plt

import scipy.stats as stats

x = np.linspace(-5, 5, 100) ax = plt.subplot() distnames = ['laplace', 'norm', 'uniform']

for distname in distnames:

if distname == 'uniform':

dist = getattr(stats, distname)(loc=-2, scale=4)

else:

dist = getattr(stats, distname)

data = dist.rvs(size=1000)

kur = kurtosis(data, fisher=True)

y = dist.pdf(x)

ax.plot(x, y, label="{}, {}".format(distname, round(

![{\displaystyle [83.6,99.46,103.31,105,91]}](https://en.wikipedia.org/api/rest_v1/media/math/render/svg/489f40813b01336037dcb223827fa68fa306a659)

![{\displaystyle [-2,-1,0,1,2,3,4,5...]}](https://en.wikipedia.org/api/rest_v1/media/math/render/svg/c616ac21ce34d63e435e912b76bb0db9878927df)

![{\displaystyle [1,2,3,4,5,6]}](https://en.wikipedia.org/api/rest_v1/media/math/render/svg/588ac5db1eb89e7ab64f6e0bc72fe742a1d59a7f)

![{\displaystyle [...6,6.5,7,7.5...]}](https://en.wikipedia.org/api/rest_v1/media/math/render/svg/7876394106f8e1a3763a4df576358dee40704079)